On December 18, X-Humanoid officially open-sourced XR-1, China's first and only embodied VLA (Vision-Language-Action) large model to pass the national embodied intelligence standards testing, along with the supporting RoboMIND 2.0 data foundation and the latest version of ArtVIP. Building on these open-source releases, the embodied intelligence industry can pursue its most essential need: enabling robots to work effectively across a wide range of application scenarios. The release aims to propel China's embodied intelligence sector into a new era of robots that are "fully autonomous and more practical."

Focused on the core mission of "enabling robots to work and work well," X-Humanoid has built the "Tien-Kung" universal robot platform and the "Huisi Kaiwu" universal embodied intelligence platform. Around the "Tien-Kung" platform, X-Humanoid has released multiple universal robot platforms, including Tien-Kung 2.0 and Tianyi 2.0, laying the physical foundation for humanoid robots to perform work. Coordination between the embodied "large brain" (high-level reasoning and planning) and "small brain" (motion control) is the other prerequisite for humanoid robots to work effectively. Within "Huisi Kaiwu," X-Humanoid has already open-sourced large-brain achievements including the WoW (World of Wonder) world model and Pelican-VL.

This open-source release focuses on embodied small-brain capability: the VLA model XR-1, together with RoboMIND 2.0 and ArtVIP, which provide training data for XR-1 and other models.

XR-1: Giving Robots "Instinct" and Bridging the Gap Between "Seeing" and "Doing"

The embodied intelligence industry currently faces a core pain point: while AI can already handle virtual tasks such as text creation and video generation, robots often struggle with basic physical tasks like "picking up objects" or "handing things over." This stems from the disconnect between "visual perception" and "action execution."

Although robots can identify objects, they can only execute actions according to preset instructions, like "students who only know rote memorization," and they fail when the environment changes even slightly. To tackle this challenge, X-Humanoid focused on core technological breakthroughs and created XR-1, an embodied small-brain large model with "unity of knowledge and action" capabilities.

At the World Robot Conference (WRC) in August this year, X-Humanoid officially launched the cross-platform VLA model XR-1, which supports multiple scenarios, platforms, and tasks and generalizes well.

The underlying technical principle lies in XR-1's three core capabilities: cross-data-source learning, cross-modal alignment, and cross-platform control. First, cross-data-source learning lets robots train on massive amounts of human video, reducing training costs and improving efficiency. Second, cross-modal alignment breaks down the barrier between vision and action, enabling robots to achieve true unity of knowledge and action. Finally, cross-platform control allows XR-1 to adapt quickly to robot platforms of different types and brands.
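
The article does not say how XR-1 implements cross-modal alignment. One widely used way to align two modalities is a contrastive (InfoNCE) objective, which pulls the embedding of each camera observation toward the embedding of the action actually taken and pushes it away from the other actions in the same batch. The pure-Python sketch below shows that general technique on toy 2-D embeddings; it is an assumption for illustration, not X-Humanoid's method, and `cosine`, `info_nce`, the embeddings, and the temperature value are all hypothetical.

```python
import math
from typing import List

Vector = List[float]

# Illustrative only: XR-1's actual alignment objective is not described in the source.

def cosine(a: Vector, b: Vector) -> float:
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def info_nce(vision: List[Vector], action: List[Vector], temperature: float = 0.1) -> float:
    """One-directional (vision to action) InfoNCE loss, averaged over the batch.

    vision[i] and action[i] form a positive pair; every other action[j]
    in the batch acts as a negative for vision[i].
    """
    n = len(vision)
    total = 0.0
    for i in range(n):
        logits = [cosine(vision[i], action[j]) / temperature for j in range(n)]
        m = max(logits)  # subtract the max before exp() for numerical stability
        log_sum_exp = m + math.log(sum(math.exp(z - m) for z in logits))
        total += log_sum_exp - logits[i]  # equals -log softmax(logits)[i]
    return total / n

# Hypothetical toy embeddings: two observations and their matching actions.
vision = [[1.0, 0.0], [0.0, 1.0]]
action = [[1.0, 0.1], [0.1, 1.0]]
print(info_nce(vision, action))        # correctly paired: close to zero
print(info_nce(vision, action[::-1]))  # mismatched pairs: large loss
```

A correctly paired batch scores a loss close to zero while the shuffled batch scores high; minimizing this quantity during training is what would pull matching observation and action embeddings together.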

Key among these is X-Humanoid's proprietary UVMC (Unified Vision-Motor-Control) technology. It establishes a mapping between vision and action, allowing robots to convert what they see immediately into physical responses and make correct reactive movements, much like human conditioned reflexes. For example, when a robot is pouring water and sees the cup being removed, it stops pouring; when the cup opening is covered, it pushes the obstructing hand aside and continues pouring. This innovation gives robots "instinctive reactions," enabling them to handle the complex, dynamic real world and unexpected situations in work scenarios, and thus to complete tasks fully autonomously.
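
The article describes UVMC's behavior rather than its internals. The toy sketch below only illustrates the difference between closed-loop control, where the next action is chosen from the current observation at every tick, and the open-loop replay of preset instructions described earlier. A hand-written rule table stands in for the mapping XR-1 would learn; `Observation`, `pour_step`, `run_episode`, and the action names are hypothetical.

```python
from dataclasses import dataclass
from typing import List

@dataclass
class Observation:
    # Hypothetical perception flags; a real VLA model consumes raw camera images.
    cup_present: bool
    cup_opening_blocked: bool

def pour_step(obs: Observation) -> str:
    """Pick one action from the current observation only (closed loop).

    Hand-written rules stand in for a learned vision-to-action mapping.
    """
    if not obs.cup_present:
        return "stop_pouring"            # the cup was taken away
    if obs.cup_opening_blocked:
        return "push_obstruction_aside"  # a hand covers the opening
    return "pour"

def run_episode(observations: List[Observation]) -> List[str]:
    """Re-decide at every tick instead of replaying a preset action script."""
    return [pour_step(obs) for obs in observations]

# Example: the cup opening is covered once, then the cup is removed.
ticks = [
    Observation(cup_present=True, cup_opening_blocked=False),
    Observation(cup_present=True, cup_opening_blocked=True),
    Observation(cup_present=True, cup_opening_blocked=False),
    Observation(cup_present=False, cup_opening_blocked=False),
]
print(run_episode(ticks))
# → ['pour', 'push_obstruction_aside', 'pour', 'stop_pouring']
```

A preset script would have kept pouring through the second and fourth ticks; re-reading the scene each tick is what lets the policy react to the covered opening and the removed cup.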

XR-1's multi-configuration pre-training enables Tien-Kung 2.0 to achieve smooth, human-like whole-body multi-joint control, allowing it to bend and squat deeply while precisely grasping randomly placed material boxes to complete complex material-pouring tasks. Material sorting requires robots to identify parts accurately, grasp them quickly and dynamically, and classify them precisely. A lightweight VLA model fine-tuned from the XR-1 framework gives robots fast and precise material sorting capabilities.

In the industry's first continuous task of opening and passing through five doors, the robot handles each door differently: at a green lattice gate, it extends both arms to adapt its structure and coordinates with its chassis to pass through; at a blue lever-handle door, it presses down on the handle and pushes; at a narrow red door, it dynamically retracts its shoulders and adjusts its posture; at a heavy black door, it maintains steady force while advancing; and on identifying a sliding door, it slides it precisely along the track. During the closing phase, it can even reverse and switch between pushing and pulling strategies, all without human intervention. This capability stems from XR-1's real-time scene understanding and motion prediction, which give Tianyi 2.0 a fully autonomous operational instinct to "understand what it sees, act correctly, and move steadily" in complex environments.

Additionally, XR-1 pioneered a three-stage training paradigm that combines virtual and real-world data:

Stage 1: Input and accumulate over one million instances of virtual and real multi-platform data and human video data. XR-1 compresses these complex visuals and actions into a "dictionary" of discrete codes, so the robot can retrieve the "action codes" it needs on demand.

Stage 2: Pre-train XR-1 on large-scale cross-platform robot data to help it understand basic physical principles, such as "objects fall when released" and "doors open when pushed."

Stage 3: Fine-tune with small amounts of task-specific data for different scenarios (e.g., sorting, box moving, and clothes folding).
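
The "dictionary" of discrete codes in Stage 1 resembles vector quantization, in which each continuous feature vector is replaced by the index of its nearest entry in a codebook. The article gives no details of XR-1's codebook (its size, distance metric, or how it is learned), so the sketch below assumes a small, fixed, hand-written codebook and Euclidean distance purely for illustration; `nearest_code`, `quantize`, `dequantize`, and the codebook values are hypothetical.

```python
import math
from typing import List

Vector = List[float]

def nearest_code(vector: Vector, codebook: List[Vector]) -> int:
    """Return the index of the codebook entry closest to `vector` (squared L2)."""
    best_idx, best_dist = 0, math.inf
    for idx, code in enumerate(codebook):
        dist = sum((v - c) ** 2 for v, c in zip(vector, code))
        if dist < best_dist:
            best_idx, best_dist = idx, dist
    return best_idx

def quantize(sequence: List[Vector], codebook: List[Vector]) -> List[int]:
    """Map a sequence of continuous features to discrete code indices."""
    return [nearest_code(v, codebook) for v in sequence]

def dequantize(indices: List[int], codebook: List[Vector]) -> List[Vector]:
    """Look up the (approximate) continuous vectors for a list of code indices."""
    return [codebook[i] for i in indices]

# Hypothetical 3-entry codebook of "action codes" in a 2-D feature space.
codebook = [[0.0, 0.0], [1.0, 0.0], [0.0, 1.0]]
features = [[0.9, 0.1], [0.1, 0.05], [0.2, 0.8]]
print(quantize(features, codebook))
# → [1, 0, 2]
```

In learned tokenizers such as VQ-VAE, the codebook entries are trained jointly with an encoder rather than written by hand; the nearest-entry lookup itself works as shown.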

This ultimately transforms the robot from a "theoretical master with extensive knowledge" into a "dexterous expert who gets things done."

This past November, the China Electronics Standardization Institute (CESI), based on the draft national standard "Artificial Intelligence Embodied Intelligence Large Model System Technical Requirements," officially released the "Qiusuo" Embodied Intelligence Evaluation Benchmark (EIBench) and invited several leading domestic embodied intelligence teams to take part in the first evaluation. In this assessment, X-Humanoid's XR-1 was the only VLA model to pass, receiving Embodied Intelligence Test Certificate CESI-CTC-20251103 and becoming the first VLA model in China to earn this distinction.

RoboMIND 2.0 & ArtVIP: Building the Most Robust Data Foundation for "Robots at Work"

To advance real-world robot applications, X-Humanoid is not just open-sourcing individual technical capabilities but building a complete "XR-1 + RoboMIND 2.0 + ArtVIP" open-source ecosystem.

To address the scarcity of high-quality embodied intelligence data, X-Humanoid launched RoboMIND, a large-scale multi-configuration intelligent robot dataset and benchmark, in December 2024. After its release, it attracted many leading laboratories and developers worldwide, with cumulative downloads exceeding 150,000. RoboMIND 1.0 contained over 100,000 robot manipulation trajectories covering 4 robot platforms and spanning 479 tasks across 5 major scenarios and 38 skills, and it provided validation for 4 model types: ACT, DP, OpenVLA, and RDT.

RoboMIND 2.0, announced during this livestream, is a comprehensive upgrade over the previous version. First, robot manipulation trajectory data has grown to 300,000+ entries, expanding to 11 scenarios across industrial, commercial, and household settings, including industrial parts sorting, assembly line equipment, physics and chemistry laboratories, home kitchens, and home appliance interaction. The numbers of robot platforms, tasks, and skills have each more than doubled. More importantly, RoboMIND 2.0 adds 12,000+ tactile manipulation data entries to support training VTLA and MLA models. The dataset can be used to train both large-brain and small-brain robot models, supports multiple robots collaborating on long-horizon tasks, and open-sources a large amount of ArtVIP-based simulation data while supporting batch evaluation on simulation data.

As the data foundation for XR-1, RoboMIND 2.0 provides large-scale multimodal training data that blends virtual and real sources, lowering the barrier to model training. Meanwhile, ArtVIP, X-Humanoid's newly released high-fidelity articulated-object digital asset dataset, continues to expand: its collection of high-fidelity digital-twin articulated objects now exceeds 1,000 items and is still growing, covering 6 major scenario types and providing interactive objects across all of them. This release also open-sources a large amount of new ArtVIP simulation data assets as part of RoboMIND 2.0.

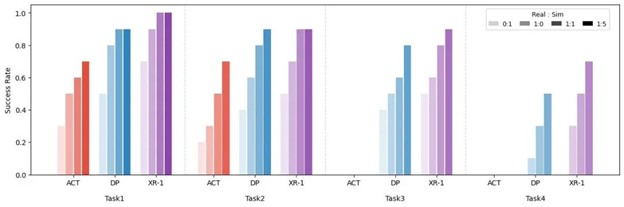

According to preliminary tests on leading robot models, including ACT, DP, and the VLA model XR-1, increasing the proportion of ArtVIP simulation data in robot training effectively improves success rates across different tasks. For example, for XR-1, raising the ratio of real robot data to simulation data from 1:0 to 1:5 improved the average success rate across 4 different tasks by over 25%.
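
A real-to-simulation ratio such as 1:5 means that 5 of every 6 training samples (about 83%) come from simulation, while 1:0 means real data only. The article does not say how the ratio was applied during training; the sketch below assumes it is enforced per batch by sampling with replacement, and `sim_fraction` and `mixed_batch` are hypothetical helpers rather than part of any RoboMIND or ArtVIP tooling.

```python
import random
from typing import List, Sequence, TypeVar

T = TypeVar("T")

def sim_fraction(real_parts: int, sim_parts: int) -> float:
    """Share of samples drawn from simulation for a real:sim ratio."""
    return sim_parts / (real_parts + sim_parts)

def mixed_batch(real_data: Sequence[T], sim_data: Sequence[T],
                real_parts: int, sim_parts: int,
                batch_size: int, rng: random.Random) -> List[T]:
    """Sample one batch whose real/sim mix follows real_parts:sim_parts."""
    n_sim = round(batch_size * sim_fraction(real_parts, sim_parts))
    n_real = batch_size - n_sim
    # Sample with replacement; skip a source entirely when its share is zero.
    real_part = rng.choices(real_data, k=n_real) if n_real else []
    sim_part = rng.choices(sim_data, k=n_sim) if n_sim else []
    batch = real_part + sim_part
    rng.shuffle(batch)  # avoid an all-real-then-all-sim order within the batch
    return batch

rng = random.Random(0)
real = ["real_traj"]  # placeholder trajectories
sim = ["sim_traj"]
for ratio in [(1, 0), (1, 5)]:
    batch = mixed_batch(real, sim, *ratio, batch_size=60, rng=rng)
    print(ratio, batch.count("real_traj"), batch.count("sim_traj"))
# → (1, 0) 60 0
#   (1, 5) 10 50
```

The sketch only reproduces the data mix; the "over 25%" gain is the article's reported result for XR-1 on 4 tasks and is not something this code demonstrates.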

X-Humanoid has established partnerships with multiple collaborators to deploy humanoid robots across various industries. For example, Tien-Kung 2.0 and Tianyi 2.0 have entered Foton Cummins engine factories, where they autonomously pick, place, and transport material boxes on "unmanned production lines," adapting to different shelf heights and box types and completing the "last mile" validation from laboratory to real production. X-Humanoid has also partnered with the China Electric Power Research Institute to deploy humanoid robots for high-risk power inspection, and with the Li-Ning Sports Science Laboratory to run long-duration, high-intensity running-shoe tests using humanoid robots. Recently, X-Humanoid also signed a cooperation agreement with Bayer to jointly advance humanoid robot and embodied intelligence technology in scenarios including solid pharmaceutical manufacturing, production, packaging, quality control, warehousing, and logistics.

From deep expertise in core technologies to building an open-source ecosystem, every step X-Humanoid takes centers on the core objective of "creating fully autonomous and more practical robots," enabling robots to work and work well. Together, XR-1, RoboMIND 2.0, and ArtVIP open up capabilities across models, data, and tools, allowing more enterprises and developers to focus on scenario innovation and application deployment instead of building foundational technologies from scratch. This accelerates the large-scale application of robots in industrial manufacturing, 3D operations, commercial services, and home services, propelling robots into a new era of being fully autonomous and more practical.

About the Author

Robotics Insider

Industry analyst with 15+ years of experience covering robotics and automation technologies.
